The Advent of Hypersonic Tunnel and Aeroballistic Facilities

John V. Becker at Langley led the way in the development of conventional hypersonic wind tunnels. He built America’s first hypersonic wind tunnel in 1947, with an 11-inch test section and the capability of Mach 6.9 flow. To T. A. Heppenheimer, it was “a major advance in hypersonics,” because Becker had built the discipline’s first research instrument.[582] Becker and Eugene S. Love followed that success with their design of the 20-Inch Hypersonic Tunnel in 1958. Becker, Love, and their colleagues used the tunnel for the investigation of heat transfer, pressure, and forces acting on inlets and complete models at Mach 6. The facility featured an induction drive system that ran for approximately 15 minutes in a nonreturn circuit operating at 220-550 psia (pounds-force per square inch absolute).[583]

The need for higher Mach numbers led to tunnels that did not rely upon the creation of a flow of air by fans. A counterflow tunnel featured a gun that fired a model into a continual onrushing stream of gas or air, which was an effective tool for supersonic and hypersonic testing. An impulse wind tunnel created high temperature and pressure in a test gas through an explosive release of energy. That expanded gas burst through a nozzle at hypersonic speeds and over a model in the test section in milliseconds. The two types of impulse tunnels—hotshot and shock—introduced the test gas differently and were important steps in reaching ever-higher speeds, but NASA required even faster tunnels.[584]

The companion to a hotshot tunnel was an arc-jet facility, which was capable of evaluating spacecraft heat shield materials under the extreme heat of planetary reentry. An electric arc preheated the test gas in the stilling chamber upstream of the nozzle to temperatures of 10,000-20,000 °F. Injected under pressure into the nozzle, the heated gas created a flow that was sustainable for several minutes at low-density numbers and supersonic Mach numbers. The electric arc required over 100,000 kilowatts of power. Unlike the hotshot, the arc-jet could operate continually.[585]

NASA combined these different types of nontraditional tunnels into the Ames Hypersonic Ballistic Range Complex in the 1960s.[586] The Ames Vertical Gun Range (1964) simulated planetary impact with various model-launching guns. Ames researchers used the Hypervelocity Free-Flight Aerodynamic Facility (1965) to examine the aerodynamic characteristics of atmospheric entry and hypervelocity vehicle configurations. The research programs investigated Earth atmosphere entry (Mercury, Gemini, Apollo, and Shuttle), planetary entry (Viking, Pioneer-Venus, Galileo, and Mars Science Lab), supersonic and hypersonic flight (X-15), aerobraking configurations, and scramjet propulsion studies. The Electric Arc Shock Tube (1966) enabled the investigation of the effects of radiation and ionization that occurred during high-velocity atmospheric entries. The shock tube fired a gaseous bullet at a light-gas gun, which fired a small model into the onrushing gas.[587]

The NACA also investigated the use of test gases other than air. Designed by Antonio Ferri, Macon C. Ellis, and Clinton E. Brown, the Gas Dynamics Laboratory at Langley became operational in 1951. One facility was a high-pressure shock tube consisting of a constant-area tube 3.75 inches in diameter, a 20-inch test section, a 14-foot-long high-pressure chamber, and a 70-foot-long low-pressure section. The induction drive system consisted of a central 300-psi tank farm that provided heated fluid flow at a maximum speed of Mach 8 in a nonreturn circuit at a pressure of 20 atmospheres. Langley researchers investigated aerodynamic heating and fluid mechanical problems at speeds above the capability of conventional supersonic wind tunnels to simulate hypersonic and space-reentry conditions. For the space program, NASA used pure nitrogen and helium instead of heated air as the test medium to simulate reentry speeds.[588]

NASA built the similar Ames Thermal Protection Laboratory in the early 1960s to solve reentry materials problems for a new generation of craft, whether designed for Earth reentry or the penetration of the atmospheres of the outer planets. A central bank of 10 test cells provided the pressurized flow. Specifically, the Thermal Protection Laboratory found solutions for many vexing heat shield problems associated with the Space Shuttle, interplanetary probes, and intercontinental ballistic missiles.

Called the "suicidal wind tunnel” by Donald D. Baals and William R. Corliss because it was self-destructive, the Ames Voitenko Compressor was the only method for replicating the extreme velocities required for the design of interplanetary space probes. It was based on the Voitenko

|

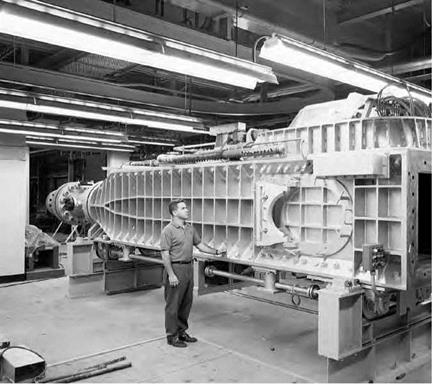

The Continuous Flow Hypersonic Tunnel at Langley in 1961. NASA. |

concept from 1965 that a high-velocity explosive, or shaped, charge developed for military use be used for the acceleration of shock waves. Voitenko’s compressor consisted of a shaped charge, a malleable steel plate, and the test gas. At detonation, the shaped charge exerts pressure on the steel plate to drive it and the test gas forward. Researchers at the Ames Laboratory adapted the Voitenko compressor concept to a selfdestroying shock tube comprised of a 66-pound shaped charge and a glass-walled tube 1.25 inches in diameter and 6.5 feet long. Observation of the tunnel in action revealed that the shock wave traveled well ahead of the rapidly disintegrating tube. The velocities generated upward of

220,0 feet per second could not be reached by any other method.[589]

Langley, building upon a rich history of research in high-speed flight, started work on two tunnels at the moment of transition from the NACA to NASA. Eugene Love designed the Continuous Flow Hypersonic Tunnel for nonstop operation at Mach 10. A series of compressors pushed high-speed air through a 1.25-inch square nozzle into the 31-inch square test section. A 13,000-kilowatt electric resistance heater raised the air temperature to 1,450 °F in the settling chamber, while large water coolers and channels kept the tunnel walls cool. The tunnel became operational in 1962 and was instrumental in the study of the aerodynamic performance of, and heat transfer on, winged reentry vehicles such as the Space Shuttle.[590]

The 8-Foot High-Temperature Structures Tunnel, opened in 1967, permitted full-scale testing of hypersonic and spacecraft components. By burning methane in air at high pressure and passing the combustion products through a hypersonic nozzle in the tunnel, Langley researchers could test structures at Mach 7 speeds and at temperatures of 3,000 °F. Too late for the 1960s space program, the tunnel was instrumental in the testing of the insulating tiles used on the Space Shuttle.[591]

NASA researchers Richard R. Heldenfels and E. Barton Geer developed the 9- by 6-Foot Thermal Structures Tunnel to test aircraft and missile structural components operating under the combined effects of aerodynamic heating and loading. The tunnel became operational in 1957 and featured a Mach 3 drive system consisting of 600-psia air stored in a tank farm filled by a high-capacity compressor. The spent air simply exhausted to the atmosphere. Modifications included additional air storage (1957), a high-speed digital data system (1959), a subsonic diffuser (1960), a Topping compressor (1961), and a boost heater system that generated 2,000 °F of heat (1963). NASA closed the 9- by 6-Foot Thermal Structures Tunnel in September 1971. Metal fatigue in the air storage field led to an explosion that destroyed part of the facility and nearby buildings.[592]

NASA’s wind tunnels contributed to the growing refinement of spacecraft technology. The multiple design changes made during the transition from the Mercury program to the Gemini program and the need for more information on the effects of angle of attack, heat transfer, and surface pressure resulted in a new wind tunnel and flight-test program. Wind tunnel tests of the Gemini spacecraft were conducted in the range of Mach 3.51 to 16.8 at the Langley Unitary Plan Wind Tunnel and in tunnels at AEDC and Cornell University. The flight-test program gathered data from the first four launches and reentries of Gemini spacecraft.[593] Correlation revealed that the two independent sets of data were in agreement.[594]